Projects

Below is a list of projects I worked on during my Master's in Robotics Engineering at WPI.

For a more up-to-date list of my projects, please see the publications page.

Grasp Synthesis Using Active Vision Techniques

It fascinates me that something as fundamental as grasping and manipulation remains so difficult for robots. This motivates me to develop new techniques that enable smarter decision-making for dexterous robotic manipulation. As a first step toward this goal, I have been working with Professor Berk Calli in the Manipulation laboratory at WPI for the past eight months. My research uses active vision to make a manipulator explore an object in as few steps as possible, with the aim of collecting point cloud data rich enough to grasp the object.
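The exploration problem above can be pictured as a greedy next-best-view loop. The Python sketch below shows one plausible shape for it, assuming a linear SVM that scores each candidate camera motion from a feature vector and that the arm keeps taking the highest-scoring motion until a grasp check passes. The feature extraction, the action names, and the callbacks `candidate_features`, `execute`, and `graspable` are hypothetical placeholders, not the lab's actual interfaces or the method used in this project.

```python
from typing import Callable, Dict, List, Sequence

Features = Sequence[float]


def svm_decision(w: Sequence[float], b: float, x: Features) -> float:
    """Linear SVM decision value w.x + b; larger means a more promising view (assumed)."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def explore_until_graspable(
    candidate_features: Callable[[], Dict[str, Features]],  # hypothetical: features per candidate motion
    execute: Callable[[str], None],                          # hypothetical: moves the arm-mounted camera
    graspable: Callable[[], bool],                           # hypothetical: grasp check on the fused cloud
    w: Sequence[float],
    b: float,
    max_steps: int = 5,
) -> List[str]:
    """Greedy loop: execute the SVM's top-scoring motion until a grasp is found or steps run out."""
    taken: List[str] = []
    for _ in range(max_steps):
        if graspable():
            break
        # Score every candidate motion with the (assumed) linear SVM and pick the best one.
        scores = {a: svm_decision(w, b, f) for a, f in candidate_features().items()}
        best = max(scores, key=scores.get)
        execute(best)
        taken.append(best)
    return taken
```

Because every iteration costs one arm motion, the quality of the scorer translates directly into the number of exploration steps.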

A real-time implementation of the algorithm is shown below.

The robot arm successfully finds a graspable pose in a single action guided by an SVM model.

The robot arm successfully finds a graspable pose in three actions guided by a trained SVM model.

Modified DDPG for Motion Planning

The long planning times and complexity of traditional sampling-based motion planning approaches inspired me to explore methods that tackle motion planning faster and more simply. As a step toward this, for my Robot Dynamics course project I am modifying the deep deterministic policy gradient (DDPG) algorithm, a reinforcement learning method, to solve motion planning for manipulators. The modifications include incorporating expert trajectories generated by BiRRT into the replay buffer and adding hindsight exploration for faster convergence.
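The replay-buffer changes described above can be sketched in Python. This is a minimal illustration, not the project's code: it assumes that "hindsight exploration" refers to hindsight experience replay (HER) with the "future" relabelling strategy, assumes a goal-conditioned sparse reward (0 within a tolerance of the goal, -1 otherwise), and keeps planner demonstrations (for example BiRRT paths converted to state/action pairs) in a separate store that is never evicted. The class `DemoHerReplayBuffer` and its parameters `her_k` and `demo_fraction` are hypothetical names.

```python
import math
import random
from collections import deque
from dataclasses import dataclass
from typing import Deque, List, Sequence, Tuple

Vec = Tuple[float, ...]


@dataclass(frozen=True)
class Transition:
    state: Vec
    action: Vec
    next_state: Vec
    goal: Vec
    reward: float
    is_demo: bool  # True if the transition came from a planner (e.g. BiRRT) trajectory


def sparse_reward(achieved: Vec, goal: Vec, tol: float = 0.05) -> float:
    """Assumed goal-conditioned sparse reward: 0 within `tol` of the goal, -1 otherwise."""
    return 0.0 if math.dist(achieved, goal) <= tol else -1.0


class DemoHerReplayBuffer:
    """Hypothetical buffer: keeps expert demonstrations and applies HER 'future' relabelling."""

    def __init__(self, capacity: int = 100_000, her_k: int = 4, seed: int = 0):
        self.her_k = her_k                                       # relabelled copies per transition
        self.rng = random.Random(seed)
        self.agent: Deque[Transition] = deque(maxlen=capacity)  # oldest agent data is evicted
        self.demos: List[Transition] = []                       # demonstrations are never evicted

    def add_episode(self, states: Sequence[Vec], actions: Sequence[Vec], goal: Vec,
                    is_demo: bool = False) -> None:
        """Store one episode; `states` holds s_0..s_T, so len(states) == len(actions) + 1."""
        store = self.demos if is_demo else self.agent
        T = len(actions)
        for i in range(T):
            s, a, s_next = states[i], actions[i], states[i + 1]
            store.append(Transition(s, a, s_next, goal, sparse_reward(s_next, goal), is_demo))
            # HER 'future' strategy: treat a state reached later in this episode as the goal.
            for _ in range(self.her_k):
                g = states[self.rng.randint(i + 1, T)]
                store.append(Transition(s, a, s_next, g, sparse_reward(s_next, g), is_demo))

    def sample(self, batch_size: int, demo_fraction: float = 0.1) -> List[Transition]:
        """Mix a fixed fraction of demonstration transitions into each minibatch."""
        n_demo = min(len(self.demos), round(batch_size * demo_fraction))
        batch = self.rng.choices(self.demos, k=n_demo)
        if self.agent:
            batch += self.rng.choices(self.agent, k=batch_size - n_demo)
        return batch
```

Keeping demonstrations in their own store lets every minibatch contain a fixed share of expert transitions even after the agent's own experience has filled the buffer, while the relabelled copies turn otherwise failed episodes into successful examples for nearby goals.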

Project team members: Yash Shukla, Mihir Deshingkar, Daniel Jeswin Nallathambi

A real-time implementation of the algorithm is shown below.

The blue circle marks the start state and the green circle marks the goal state.

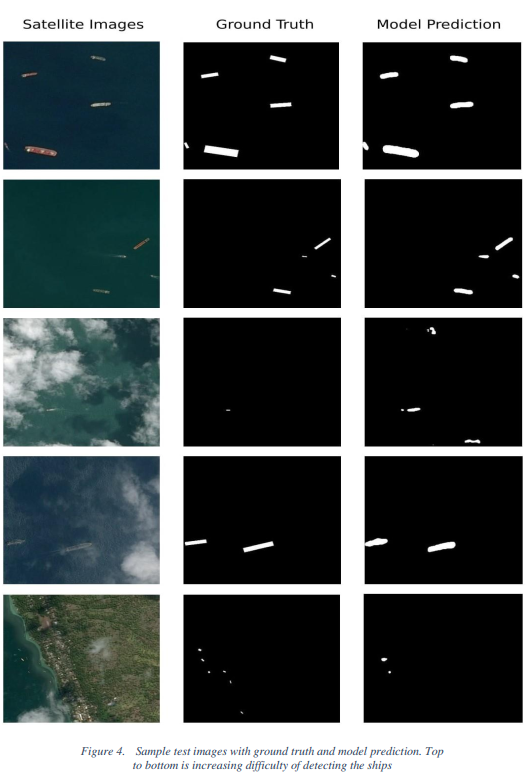

Ship Detection and Segmentation from Satellite Imagery

This study aims to segment individual ship instances in aerial images. To our knowledge, there is no established approach dedicated to segmenting ships from aerial imagery. My approach cascades U-Nets for image segmentation, based on the idea of iterative refinement. A typical U-Net consists of a convolutional encoder that downsamples the image, followed by an up-sampling (deconvolutional) decoder, linked to the encoder by skip connections, that produces a segmentation mask for the objects in question: ships.
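The cascading idea above can be sketched independently of any deep learning framework. In this Python sketch each U-Net stage is abstracted as a callable that receives the image channels plus the previous stage's mask as an extra input channel and returns a refined mask. The `Stage` signature, the all-zero initial mask, and the plain nested-list image representation are assumptions made to keep the example self-contained; in practice each stage would be a trained U-Net.

```python
from typing import Callable, List, Sequence

Grid = List[List[float]]               # one H x W channel
Stage = Callable[[List[Grid]], Grid]   # [image channels..., previous mask] -> refined mask


def cascade_segment(image: List[Grid], stages: Sequence[Stage]) -> List[Grid]:
    """Run segmentation stages in sequence; each sees the image plus the previous mask.

    Returns the mask produced by every stage so intermediate refinements can be inspected.
    """
    h, w = len(image[0]), len(image[0][0])
    mask: Grid = [[0.0] * w for _ in range(h)]  # the first stage starts from an empty prior
    masks: List[Grid] = []
    for stage in stages:
        mask = stage(image + [mask])  # append the previous estimate as an extra channel
        masks.append(mask)
    return masks
```

Feeding the previous mask back in lets later stages concentrate on correcting the earlier stage's errors rather than segmenting from scratch, which is the iterative-refinement idea the cascade relies on.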

Project team members: Yash Shukla, Bryan Inacio Rathos

Sample results from the study are displayed below.

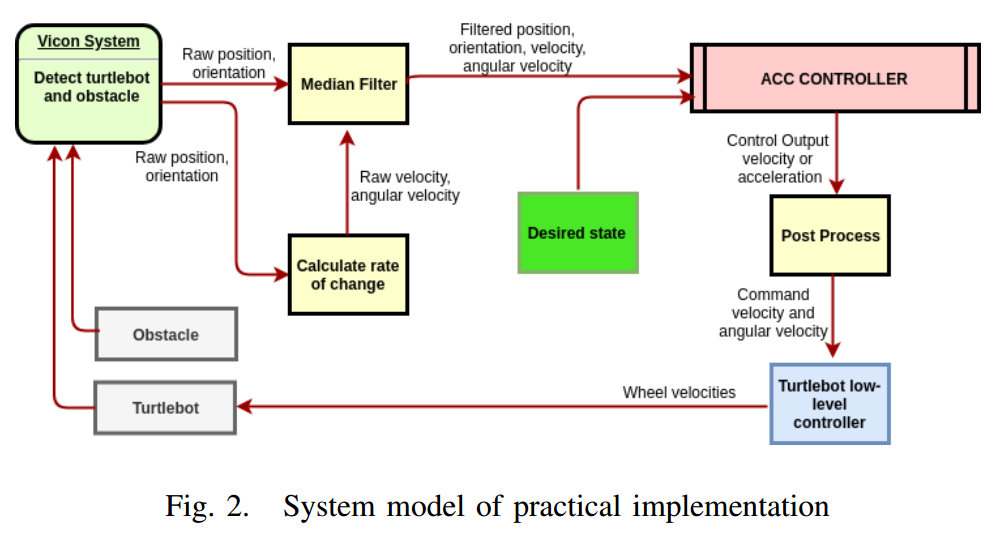

TurtleBot Trajectory Generation and Tracking

We present an implementation of one-dimensional and two-dimensional adaptive cruise control that follows a desired trajectory using control Lyapunov functions (CLFs) while satisfying the constraints of a control barrier function (CBF) that keeps the robot from running into obstacles. The Lyapunov condition is treated as a soft constraint and the barrier condition as a hard constraint, and both are handled together in a single quadratic program (QP). The controller was implemented on a TurtleBot, with a Vicon motion-capture system providing feedback.
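For the 1D case, the CLF-CBF QP described above is small enough to solve in closed form. The Python sketch below is a simplification under stated assumptions, not the controller that ran on the TurtleBot: it models the robot as a single integrator x_dot = u moving along a line, uses V = 0.5*e^2 with tracking error e = x - x_ref and barrier h = x_obs - x for an obstacle ahead, relaxes the CLF condition with a penalised slack variable, and keeps the CBF condition as a hard constraint. With a single control input the QP's minimiser can be computed exactly (eliminate the slack, then clip to the CBF bound), so no QP solver is needed. The parameter values, the reference x_ref(t) = v_ref*t, and the obstacle position are illustrative.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class ClfCbfParams:
    gamma: float = 2.0  # CLF rate: V_dot <= -gamma * V (soft, relaxed by a slack d)
    alpha: float = 1.0  # CBF gain: h_dot >= -alpha * h (hard)
    p: float = 1e4      # weight on the squared CLF slack


def clf_cbf_qp_1d(x: float, x_ref: float, v_ref: float, x_obs: float, prm: ClfCbfParams) -> float:
    """Exact solution of the scalar CLF-CBF QP for a single integrator x_dot = u:

        min_{u, d}  0.5*(u - v_ref)**2 + 0.5*p*d**2
        s.t.        e*(u - v_ref) <= -gamma*V + d   (CLF, V = 0.5*e**2, e = x - x_ref)
                    -u >= -alpha*h                  (CBF, h = x_obs - x)
    """
    e = x - x_ref
    V = 0.5 * e * e
    h = x_obs - x
    # Eliminating the slack leaves a convex 1D problem in w = u - v_ref whose minimiser is
    # w* = -p*gamma*e*V / (1 + p*e**2); it is 0 when e == 0, where the CLF already holds.
    w = -prm.p * prm.gamma * e * V / (1.0 + prm.p * e * e)
    # The CBF reduces to the bound u <= alpha*h; for a convex objective in one variable,
    # clipping the unconstrained minimiser to that bound gives the exact constrained optimum.
    return min(v_ref + w, prm.alpha * h)


def simulate(T: float = 20.0, dt: float = 0.01, x0: float = -1.0, v_ref: float = 0.5,
             x_obs: float = 6.0, prm: Optional[ClfCbfParams] = None) -> Tuple[List[float], List[float]]:
    """Track x_ref(t) = v_ref*t toward an obstacle at x_obs; returns positions and h values."""
    prm = prm or ClfCbfParams()
    x, xs, hs = x0, [], []
    for k in range(int(round(T / dt))):
        u = clf_cbf_qp_1d(x, v_ref * k * dt, v_ref, x_obs, prm)
        x += u * dt  # forward-Euler step of x_dot = u
        xs.append(x)
        hs.append(x_obs - x)
    return xs, hs
```

With the defaults, the robot first converges onto the moving reference, then slows as it nears the obstacle at x_obs = 6.0 and approaches it without crossing, because the CBF bound caps its speed at alpha*h even after the reference has moved past the obstacle.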

Project team members: Yash Shukla, Shubham Jain

The system architecture is displayed below.

A real-time implementation of the algorithm in 1D is shown below.

A real-time implementation of the algorithm in 2D is shown below.

Perception-Action Coordination

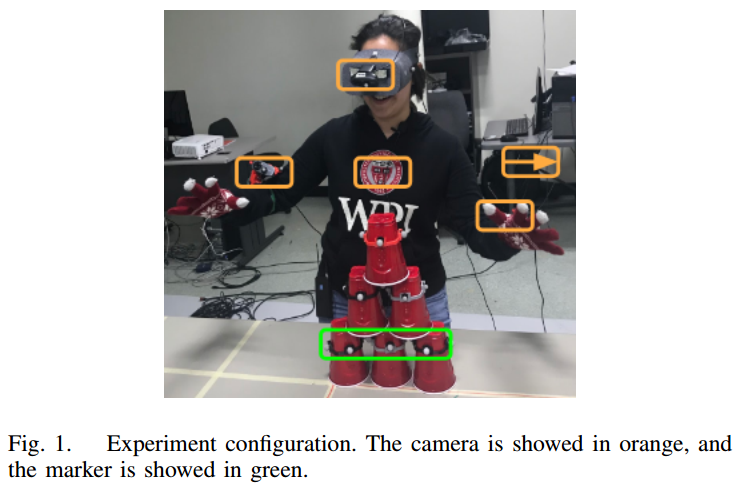

Shared-autonomy tasks require perception and action to be properly coordinated. Recent approaches in robot perception build on the insight that perception is facilitated by interaction with the environment. In this paper, we design an experimental study aimed at understanding human preferences in the selection and control of wearable cameras. The study helps us understand how a human, serving as a model of an ideal robot, acts when their vision is restricted by limiting the degrees of freedom, the field of view, or the depth resolution available for perception.

Project team members: Yash Shukla, Alexandra Valiton, Hanqing Zhang, Hanshen Yu

Experiment configuration is displayed below.